For the last several years, ONF has been working with network operators to transform their access networks from using closed and proprietary hardware appliances to being able to take advantage of software running on white-box servers, switches, and access devices.

This transformation is partly motivated by the CAPEX savings that come from replacing purpose-built appliances with commodity hardware, but it is mostly driven by the need to increase feature velocity through the softwarization of the access network. The goal is to enable new classes of edge services, such as Public Safety, Internet-of-Things (IoT), Immersive User Interfaces, and Automated Vehicles, that benefit from low-latency connectivity to end users (and their devices). This is all part of the growing trend to move functionality to the network edge.

The first step in this transformation has been to disaggregate and virtualize the legacy hardware appliances that have historically been used to build access networks. This is done by applying SDN principles (decoupling the network control and data planes) and by breaking monolithic applications into a set of microservices. This disaggregation (and associated refactoring) is by its nature an ongoing process, but there has been enough progress to warrant early-stage field trials in major operator networks.

But it is the second step in this transformation, integrating the resulting disaggregated components back into a functioning system ready to be deployed in a production environment, that is proving to be the biggest obstacle to realizing the full potential of softwarization. In short, network operators face a disruptor’s dilemma:

- Disaggregation catalyzes innovation. This is the value proposition of open networking.

- Integration facilitates adoption. This is a key requirement for any operational deployment.

Network operators’ instinct in addressing this problem is to turn to large integration teams tasked with engineering the solution so it can be retrofitted into their particular environment. The problem is the widespread perception that each operational environment is so unique that any solution deployed in it must be equally unique, which unfortunately (and ironically) results in a point solution that is difficult to evolve and not particularly open to ongoing innovation. Ideally, you want to keep components free of local modifications so you can consume future releases from upstream sources without having to repeat the integration effort. Augmenting an open source solution with customizations that generate value is one thing, but the brittle nature of retrofitting puts network operators at risk of trading away the value of disaggregation (which enables feature velocity) to regain the operational familiarity and stability of fixed/point solutions.

But is such an outcome preordained? Is there a technical approach to operationalizing a collection of disaggregated components in a way that sustains the innovate/deploy/operate cycle? We believe there is a technical solution to this problem, one that automatically constructs a solution from a set of disaggregated components and integrates that solution into different deployment environments.

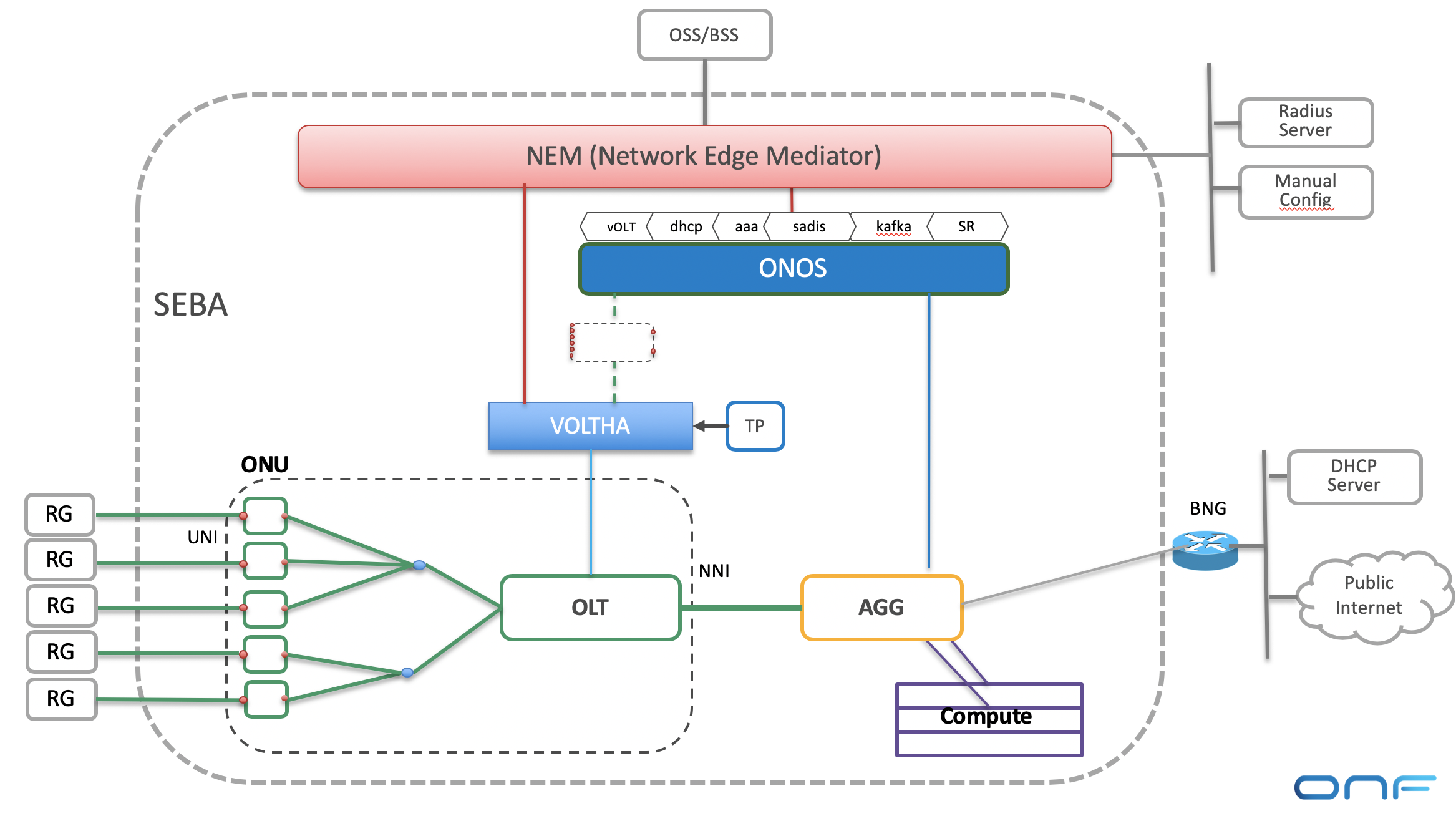

The starting point is the plethora of CI/CD tools that have proven useful in building cloud services—everything from Kubernetes to Helm to Jenkins—but they are not sufficient because they do not directly address the variability of deployment environments or operator business logic. The CORD project demonstrates how to factor the operator’s operational and business requirements into an integrated solution in an automated and repeatable way. The key is NEM (Network Edge Mediator), which plays two important roles.

First, NEM assembles a unified solution. Each operator wants a different subset of the available disaggregated components, which NEM allows them to specify as a configuration-time profile. In the case of SDN-Enabled Broadband Access (SEBA), for example, some operators want BNG to be internal to the solution and some want it to be external, and when internal to the solution, some want the BNG implemented in containers and others want it implemented in the switching fabric. NEM assembles the collection of components specified in a declarative profile into a self-contained whole by generating the necessary “glue” code. It presents a coherent Northbound Interface to the operator’s OSS/BSS, and it manages any state that needs to be shared among the components. This avoids hardwiring dependencies into each component.
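To make the idea of a configuration-time profile concrete, here is a minimal sketch of how a declarative profile might be resolved into an ordered set of components. It is not NEM's actual data model or API: the `CATALOG` contents, the component names, the `bng` profile key, and the `assemble` function are all hypothetical, chosen only to mirror the internal/external and container/fabric BNG choices described above.

```python
# Hypothetical catalog of disaggregated components and their dependencies.
# Names are illustrative; they are not taken from SEBA or NEM.
CATALOG = {
    "access-control": [],
    "sdn-controller": [],
    "bng-container": ["sdn-controller"],
    "bng-fabric": ["sdn-controller"],
}

# Maps the (assumed) "bng" profile option to a catalog component.
BNG_OPTIONS = {
    "external": None,            # BNG lives outside the solution
    "container": "bng-container",
    "fabric": "bng-fabric",
}


def assemble(profile):
    """Return component names in dependency order for a declarative profile."""
    selected = list(profile["components"])

    bng_choice = profile.get("bng", "external")
    if bng_choice not in BNG_OPTIONS:
        raise ValueError(f"unknown bng option: {bng_choice}")
    if BNG_OPTIONS[bng_choice] is not None:
        selected.append(BNG_OPTIONS[bng_choice])

    ordered = []

    def visit(name):
        # Depth-first walk so that dependencies precede their dependents.
        if name in ordered:
            return
        if name not in CATALOG:
            raise KeyError(f"component not in catalog: {name}")
        for dep in CATALOG[name]:
            visit(dep)
        ordered.append(name)

    for name in selected:
        visit(name)
    return ordered


if __name__ == "__main__":
    print(assemble({"components": ["access-control"], "bng": "fabric"}))
    print(assemble({"components": ["access-control"], "bng": "external"}))
```

The point of the sketch is that the operator edits only the profile; the components themselves carry no knowledge of which siblings were selected.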

Second, NEM explicitly manages environment dependencies by allowing operators to define a run-time workflow. Returning to the SEBA example, the workflow specifies how the integrated solution interacts with the surrounding operational environment as individual subscribers are managed throughout their lifecycle (e.g., coming online/offline). The workflow specifies all aspects of what happens as ONUs are detected and approved, EAPoL packets arrive and subscribers are authenticated, DHCP requests are received and IP addresses assigned, and finally, packets start flowing. Different workflows can be defined for different operators, yet reuse all the same components without modification.
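One way to picture a run-time workflow is as a transition table over subscriber states, kept separate from the components that emit the events. The sketch below is an assumption-laden illustration, not how NEM encodes workflows: the state names, event names, and the `run_workflow` helper are hypothetical, and `WORKFLOW_B` (an operator that skips EAPoL authentication) is an invented variant included only to show two workflows sharing the same machinery.

```python
# Hypothetical workflow for an operator that authenticates subscribers
# via EAPoL before assigning an address. Keys are (state, event) pairs.
WORKFLOW_A = {
    ("discovered", "onu_approved"): "approved",
    ("approved", "eapol_authenticated"): "authenticated",
    ("authenticated", "dhcp_assigned"): "online",
    ("online", "onu_offline"): "discovered",
}

# Invented variant: an operator that goes straight from approval to DHCP.
WORKFLOW_B = {
    ("discovered", "onu_approved"): "approved",
    ("approved", "dhcp_assigned"): "online",
    ("online", "onu_offline"): "discovered",
}


def run_workflow(workflow, events, state="discovered"):
    """Apply events in order and return the subscriber's final state."""
    for event in events:
        key = (state, event)
        if key not in workflow:
            raise ValueError(f"event {event!r} not allowed in state {state!r}")
        state = workflow[key]
    return state


if __name__ == "__main__":
    print(run_workflow(
        WORKFLOW_A, ["onu_approved", "eapol_authenticated", "dhcp_assigned"]))
    print(run_workflow(WORKFLOW_B, ["onu_approved", "dhcp_assigned"]))
```

Both tables are driven by the same `run_workflow` function, which mirrors the claim that different operators can define different workflows while reusing the same components unmodified.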

Nothing comes for free. Component developers need to program for reuse, which means not hardwiring dependencies on other components, but instead getting their environment-specific configuration parameters from NEM. Similarly, network operators need to specify the profiles and workflows that satisfy their particular operating and business requirements. But by centralizing this information in NEM’s declarative data model, it is possible for operators to break the disruptor’s dilemma, and deploy integrated solutions built from disaggregated components, thereby benefiting from a fully agile innovate/deploy/operate cycle.

Larry Peterson

CTO, ONF